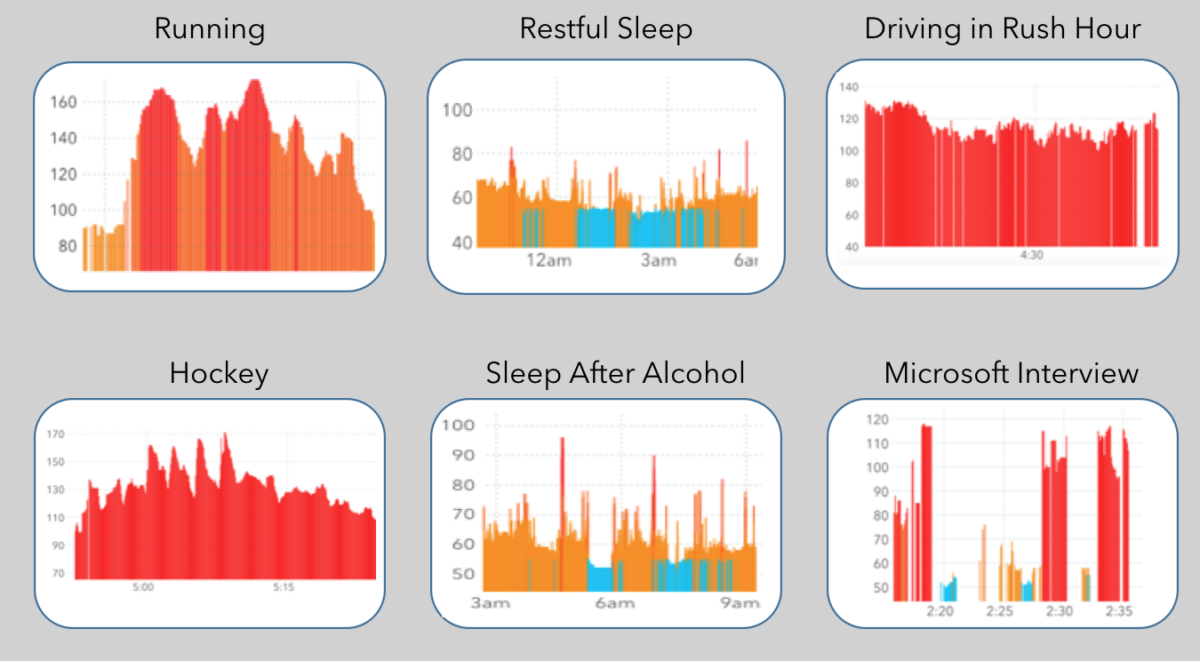

Your heart says a lot about you. Workouts, nights of restful and restless sleep, and stress each have distinct heart rate signatures.

1 in 4 Americans now owns a wearable device. At Cardiogram, we’re using this abundance of data to screen for cardiovascular diseases. Today, in collaboration with our research partners at UC San Francisco (UCSF), we released the first clinically validated paper testing the accuracy of our screening algorithm: Passive Detection of Atrial Fibrillation Using a Commercially Available Smartwatch.

Can a watch save your life?

Atrial fibrillation is a silent killer. The heart arrhythmia causes more life-threatening strokes than any other chronic condition, and will affect 1 in 4 of us. But the sad fact is that atrial fibrillation often goes unnoticed: It is estimated that 40% of those who experience the heart condition are completely unaware of it. And even those who notice the arrhythmia often go undiagnosed, as the symptoms may dissipate before they get to a cardiologist.

To effectively screen for this disease, we need a continuous heart rate monitor that is worn not just by the sick, but also by those who don’t suspect anything is wrong. We need to use devices that people are already wearing: Apple Watch, Garmin, TicWatch, and so on.

Two years ago, Cardiogram and UCSF created the mRhythm study to collect and analyze user heart rate data. We’ve trained DeepHeart, our deep learning architecture, on 139 million heart rate measurements contributed by 9,750 users. Our results show that DeepHeart can detect atrial fibrillation with higher accuracy than FDA-cleared wearable ECG devices. For technical details on DeepHeart’s architecture, read DeepHeart: Semi-Supervised Sequence Learning for Cardiovascular Risk Prediction, which we presented this year at The Thirty-Second AAAI Conference on Artificial Intelligence (AAAI-18).

Accuracy on an in-hospital validation set

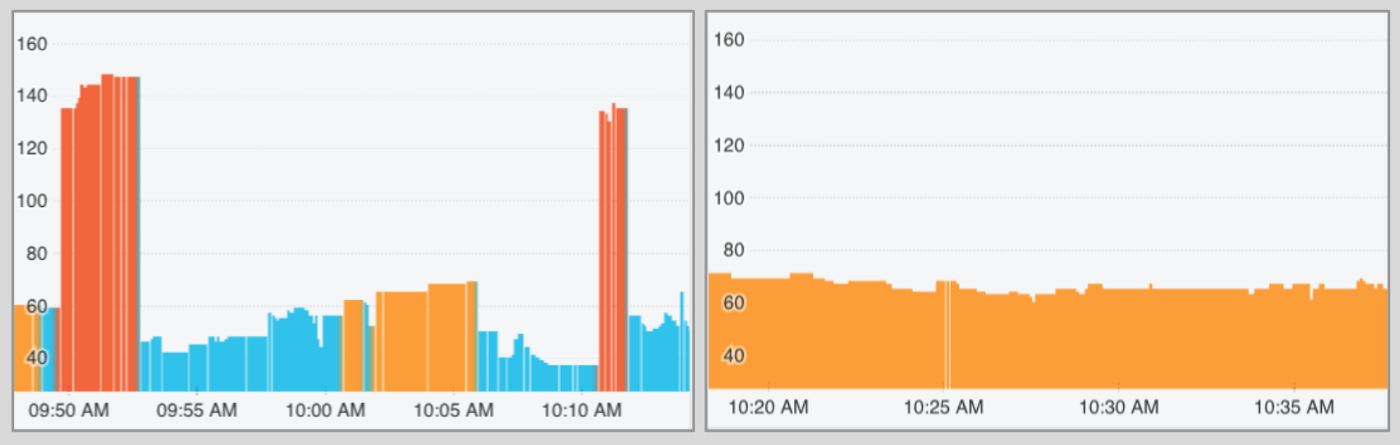

Your heart is controlled by electrical signals that trigger contractions. In people with atrial fibrillation, these signals become chaotic and irregular, causing the heart rate to change rapidly.

If caught, this irregular heart rhythm can be stopped using a medical procedure called a cardioversion. You can think of this as a shock that resets your heart to normal sinus rhythm.

Over the last year, patients entering UCSF for this procedure were asked to participate in our study. For the 51 patients who agreed, a research coordinator placed an Apple Watch on their wrist, which continuously recorded data before, during, and after the procedure.

We split each patient’s data into segments, one before and one after the procedure. We then ran DeepHeart on each segment, and found that it achieved a c-statistic of 97% (95% confidence interval: 94%-100%).

What exactly does 97% c-statistic mean?

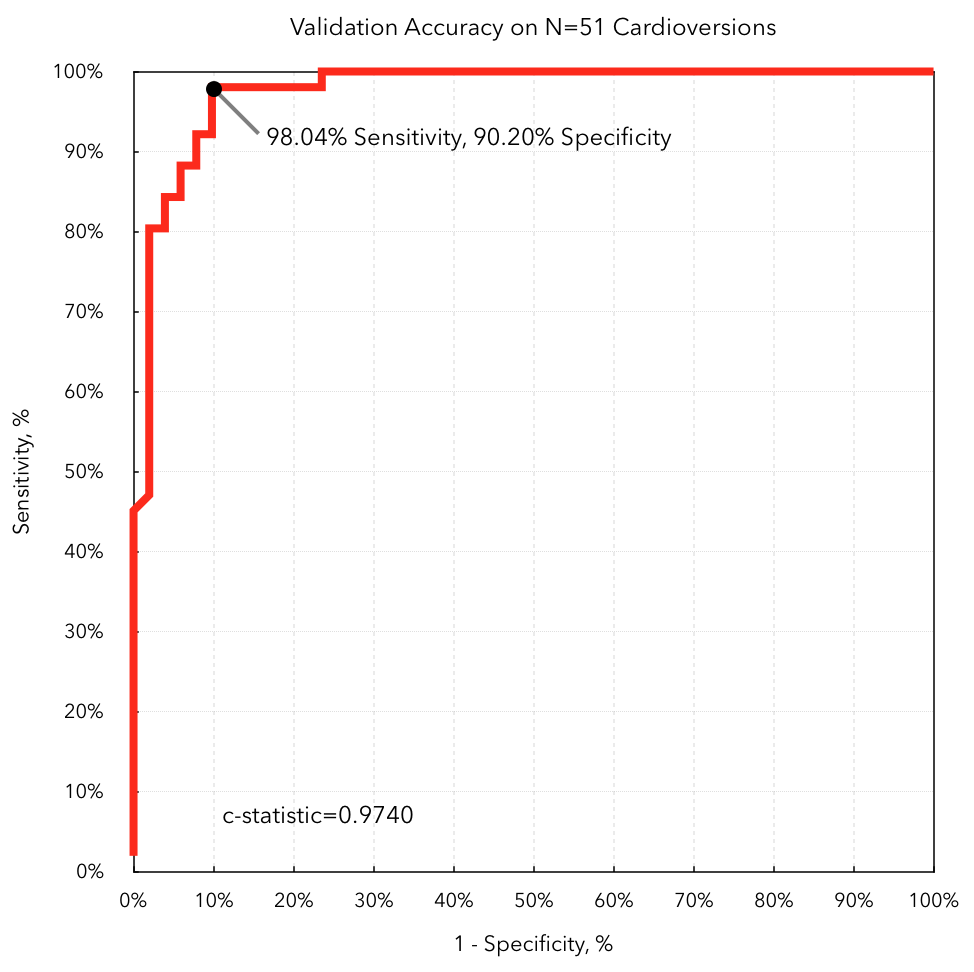

The 97% figure is our c-statistic, also known as the area under the receiver operating characteristic (ROC) curve. If you're not familiar with statistics or machine learning, here is what that number means.

When a doctor tests you for a disease, she will ultimately say that you have, or do not have, the condition. DeepHeart works a bit differently: it returns a number between 0 and 1 that indicates your risk for the disease. A human then decides on a cutoff point, say 0.9, where all patients with scores greater than 0.9 are screened positive, and the rest negative.

In reporting the accuracy of a binary classification algorithm (e.g., whether or not you have atrial fibrillation), we would like to remain agnostic to the choice of this cutoff. We use a common measurement known as the c-statistic, which is the area under the ROC curve traced out by sweeping the cutoff across all possible values. Higher is better: a perfect classifier has a c-statistic of 100%, and a random classifier has a c-statistic of 50%.
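To make the definition concrete, here is a minimal Python sketch of one standard way to compute a c-statistic: it equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as half. The function name and the example scores are hypothetical illustrations, not DeepHeart's code or study data.

```python
def c_statistic(scores, labels):
    """Fraction of (positive, negative) pairs where the positive scores higher.

    Ties count as half a win. This pairwise form is equivalent to the
    area under the ROC curve.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical risk scores (1 = atrial fibrillation, 0 = normal rhythm).
scores = [0.95, 0.80, 0.60, 0.70, 0.30, 0.10]
labels = [1, 1, 1, 0, 0, 0]
auc = c_statistic(scores, labels)
print(f"c-statistic: {auc:.3f}")
```

Note that no cutoff appears anywhere in the computation, which is why the c-statistic lets us compare models before deciding where to draw the line.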

To deploy DeepHeart to Cardiogram users outside of the mRhythm study, we’ll choose a cutoff point, above which a user will be notified that he’s likely experiencing atrial fibrillation. The cutoff point marked in the figure above gives us a sensitivity (true positive rate) of 98.04%, and a specificity (true negative rate) of 90.2%.
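Once a cutoff is fixed, sensitivity and specificity follow directly from counting. The sketch below shows the arithmetic on made-up scores; the function name, the scores, and the 0.9 cutoff are assumptions for illustration and are not the values used in the study.

```python
def sensitivity_specificity(scores, labels, cutoff):
    """Return (sensitivity, specificity) when scores above `cutoff` screen positive."""
    tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s > cutoff)
    fn = sum(1 for s, y in zip(scores, labels) if y == 1 and s <= cutoff)
    tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s <= cutoff)
    fp = sum(1 for s, y in zip(scores, labels) if y == 0 and s > cutoff)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative example")
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    return sensitivity, specificity


# Hypothetical risk scores (1 = atrial fibrillation, 0 = normal rhythm).
scores = [0.95, 0.92, 0.85, 0.91, 0.40, 0.20]
labels = [1, 1, 1, 0, 0, 0]
sens, spec = sensitivity_specificity(scores, labels, cutoff=0.9)
print(f"sensitivity={sens:.2%}, specificity={spec:.2%}")
```

Lowering the cutoff catches more true cases at the cost of more false alarms, so the choice depends on how the two kinds of error are weighed.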

Surprisingly, our method outperforms an ECG device recently cleared by the FDA, which reported 93% sensitivity and 84% specificity in the same setting: a validation set of patients receiving cardioversions in-hospital.

Development of a Low-Data Deep Neural Network

Deep learning is data-hungry, but in medicine every label is a human life at risk.

AlexNet, a groundbreaking convolutional neural network for image recognition, was trained on about 1.2 million images. Compare this to two recent advances in deep learning for medicine: Google’s diabetic retinopathy algorithm and Stanford’s skin cancer detection network, each trained on about 130 thousand labels, an order of magnitude fewer.

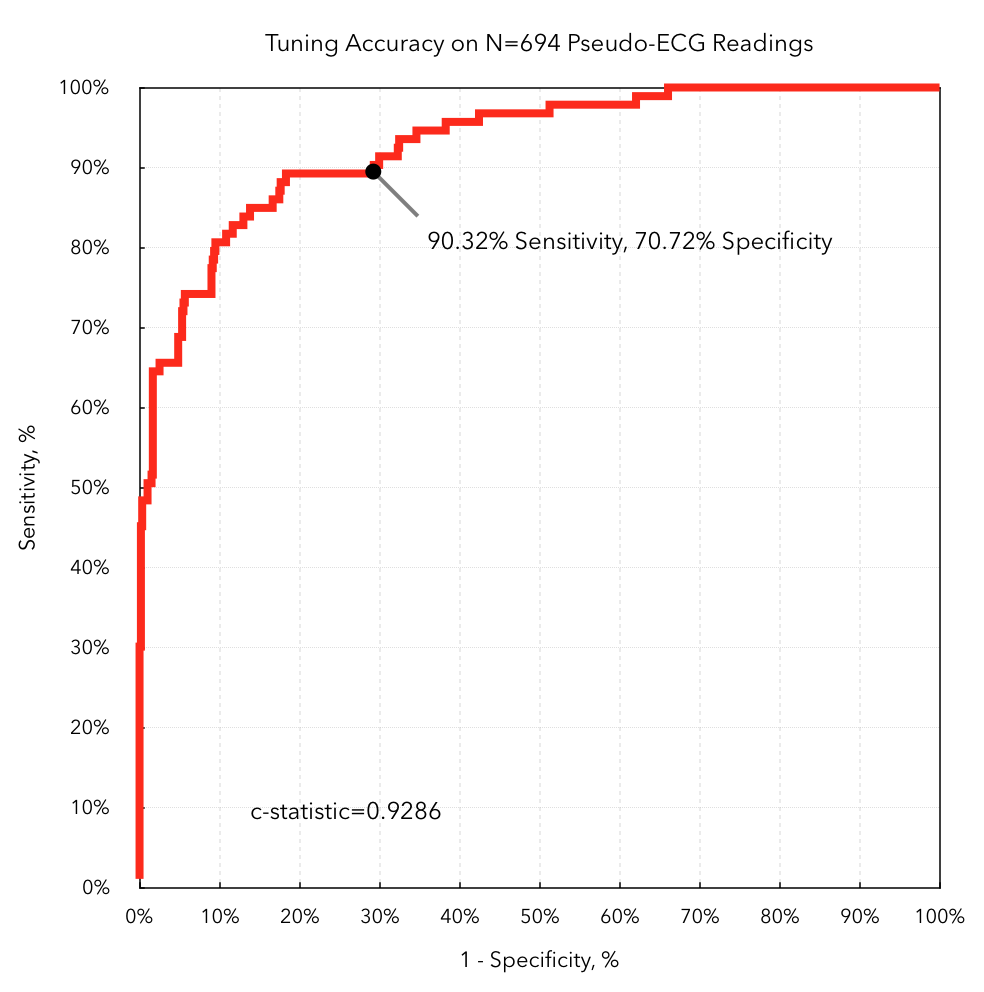

DeepHeart was trained on data from 9,750 users, another order of magnitude lower than these datasets. To obtain labeled data, we mailed 200 Cardiogram users mobile ECG devices that, when activated, would detect atrial fibrillation. We split these users into training and tuning sets.

Training deep neural nets on small datasets is an area of active research. At Cardiogram, we have an abundance of unlabeled user data, and applied unsupervised training to boost the accuracy of our model.

In one attempt, we pretrained the model as a denoising autoencoder: given user data corrupted with Gaussian noise, the model learns to predict the original, clean data. The learned model weights were then used as initialization parameters in supervised training.
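As a rough illustration of the noisy auto-encoder setup described above (often called a denoising autoencoder), the sketch below builds one training pair and the reconstruction loss a model would minimize. The noise level, function names, and heart rate values are assumptions chosen for the example; the source does not specify them.

```python
import random


def make_denoising_pair(heart_rates, noise_std=2.0, rng=None):
    """Return (noisy_input, clean_target) for one pretraining example.

    noise_std is a hypothetical value in beats per minute.
    """
    rng = rng or random.Random()
    noisy = [hr + rng.gauss(0.0, noise_std) for hr in heart_rates]
    return noisy, list(heart_rates)


def reconstruction_loss(predicted, target):
    """Mean squared error between the model's output and the clean signal."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)


# Hypothetical unlabeled heart rate readings (beats per minute).
clean = [62.0, 63.0, 61.0, 64.0, 90.0, 88.0]
noisy_input, target = make_denoising_pair(clean, noise_std=2.0, rng=random.Random(0))

# A model that simply copies its noisy input pays this loss;
# pretraining pushes the network to do better than that.
baseline_loss = reconstruction_loss(noisy_input, target)
print(f"copy-the-input loss: {baseline_loss:.3f}")
```

No labels are needed to build these pairs, which is what makes the approach attractive when unlabeled data is plentiful.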

Our second method of unsupervised pretraining outperformed the first. Here, we pretrained the network to predict a set of hand-engineered features inspired by the medical literature: average absolute difference between successive heart rate measurements in window sizes of 5 seconds, 30 seconds, 5 minutes, and 30 minutes.
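The hand-engineered feature described above can be sketched as follows. This assumes timestamps in seconds and a trailing window that ends at the most recent measurement; how the windows were actually positioned is not stated in the source, and the function name and sample data are hypothetical.

```python
# Window sizes from the text: 5 seconds, 30 seconds, 5 minutes, 30 minutes.
WINDOW_SECONDS = (5, 30, 300, 1800)


def mean_abs_successive_diff(timestamps, heart_rates, window_seconds):
    """Average |hr[i+1] - hr[i]| over readings in the trailing window.

    Returns None when the window holds fewer than two readings.
    """
    if not timestamps:
        return None
    end = timestamps[-1]
    in_window = [
        hr for t, hr in zip(timestamps, heart_rates) if end - t <= window_seconds
    ]
    if len(in_window) < 2:
        return None
    diffs = [abs(b - a) for a, b in zip(in_window, in_window[1:])]
    return sum(diffs) / len(diffs)


# Hypothetical readings taken every 5 seconds (beats per minute).
timestamps = [0, 5, 10, 15, 20]
heart_rates = [60, 64, 61, 70, 68]

features = {
    w: mean_abs_successive_diff(timestamps, heart_rates, w) for w in WINDOW_SECONDS
}
print(features)
```

Because these targets are computed from the raw signal itself, every unlabeled recording can serve as a pretraining example.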

This model obtained a 93% c-statistic (95% confidence interval: 91%-95%) on the tuning set.

Model performance in the real world

DeepHeart detects atrial fibrillation with high accuracy in a hospital environment. The real world, however, is very different from a hospital bed. Motion, sweat, and sunscreen can cause inaccurate optical heart rate readings. Alcohol consumption and exercise can mask or be mistaken for arrhythmias. Outside the hospital, detecting atrial fibrillation is much harder.

One measure of real-world performance is discussed in the previous section: tuning accuracy on pseudo-ECG labels. In another branch of the experiment, DeepHeart was tasked with predicting self-reported persistent atrial fibrillation. This is a more challenging task because the labels were not verified by an ECG and are therefore less accurate. Furthermore, the task here is to predict which users suffer from atrial fibrillation, rather than to predict individual episodes of atrial fibrillation.

DeepHeart obtained a c-statistic of 71% (confidence interval: 64%-78%) on this validation set. This number demonstrates that DeepHeart is able to perform in a real-world environment. The drop in c-statistic from 97% (cardioversions) and 93% (mobile ECG tuning set) to 71% is explained in part by imprecise labels: a patient may self-report atrial fibrillation even when he is not currently experiencing an episode.

What's next?

In February of this year, we presented early results at the AAAI Conference on Artificial Intelligence (AAAI-18) demonstrating that DeepHeart can predict diabetes with a c-statistic of 85%, high blood pressure at 81%, and sleep apnea at 83%. These results indicate that wearable devices can be used for large-scale, low-cost disease screening.

Imagine a world where diabetes can be caught early and reversed through behavioral change, where physicians are empowered by algorithms continuously analyzing troves of user data, and where everyone can benefit from low cost, non-invasive disease screening.

Want to help us build this world? If you’re a self-insured employer, healthcare payer, or healthcare provider, email us at [email protected]. And if you’re an experienced frontend engineer, drop us a note at [email protected].