Why is the world’s most advanced AI used for cat videos, but not to help us live longer and healthier lives? A brief history of AI in Medicine, and the factors that may help it succeed where it has failed before.

Imagine yourself as a young graduate student in Stanford’s Artificial Intelligence lab, building a system to diagnose a common infectious disease. After years of sweat and toil, the day comes for the test: a head-to-head comparison with five of the top human experts in infectious disease. Over the first expert, your system squeezes a narrow victory, winning by just 4%. It beats the second, third, and fourth doctors handily. Against the fifth, it wins by an astounding 52%.

Would you believe such a system exists already? Would you believe it existed in 1979? This was the MYCIN project, and in spite of the excellent research results, it never made its way into clinical practice. [1]

In fact, although we’re surrounded by fantastic applications of modern AI, particularly deep learning — self-driving cars, Siri, AlphaGo, Google Translate, computer vision — the effect on medicine has been nearly nonexistent. In the top cardiology journal, Circulation, the term “deep learning” appears only twice [2]. Deep learning has never been mentioned in the New England Journal of Medicine, The Lancet, BMJ, or even JAMA, where the work on MYCIN was published 37 years ago. What happened?

There are three central challenges that have plagued past efforts to use artificial intelligence in medicine: the label problem, the deployment problem, and fear around regulation. Before we get into those, let’s take a quick look at the state of medicine today.

The Moral Case for AI in Healthcare

Medicine is life and death. With such high stakes, one could ask: should we really be rocking the boat here? Why not just stick with existing, proven, clinical-grade algorithms?

Well, consider a few examples of the status quo:

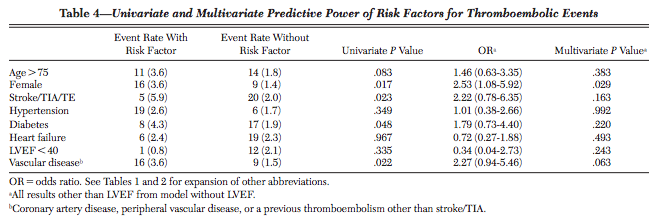

- The score that doctors use to prescribe your grandparents blood thinners, CHA2DS2-VASc, is accurate only 67% of the time. It was derived from a cohort that included only 25 people with strokes; out of 8 tested predictor variables, only one was statistically significant. [3]

- Google updates its search algorithm 550 times per year, but life-saving devices like ICDs are still programmed using simple thresholds — if heart rate exceeds X, SHOCK — and their accuracy is getting worse over time.

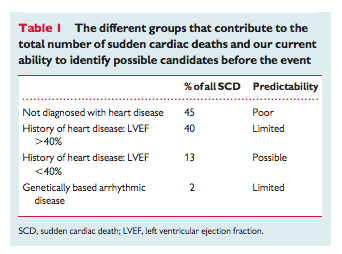

- Although cardiologists have invented a life-saving treatment for sudden cardiac death, our current algorithm to identify who needs that treatment will miss 250,000 out of the 300,000 people who will die suddenly this year. [4]

- Pressed for time, doctors can’t make sense of all the raw data being generated: “Most primary care doctors I know, if they get one more piece of data, they’re going to quit.”

To be clear, none of this means medical researchers are doing a bad job. Modern medicine is a miracle; it’s just unevenly distributed. Computer scientists can bring much to the table here. With tools like Apple’s ResearchKit and Google Fit, we can collect health data at scale; with deep learning, we can translate large volumes of raw data into insights that help both clinicians and patients take real actions.

To do that, we must solve three hard problems: one technical, one a matter of political economy, and one regulatory. The good news is that each category has new developments that may let AI succeed where it has failed before.

Central Problem #1: Healthcare as a Label Desert and One-Shot Learning

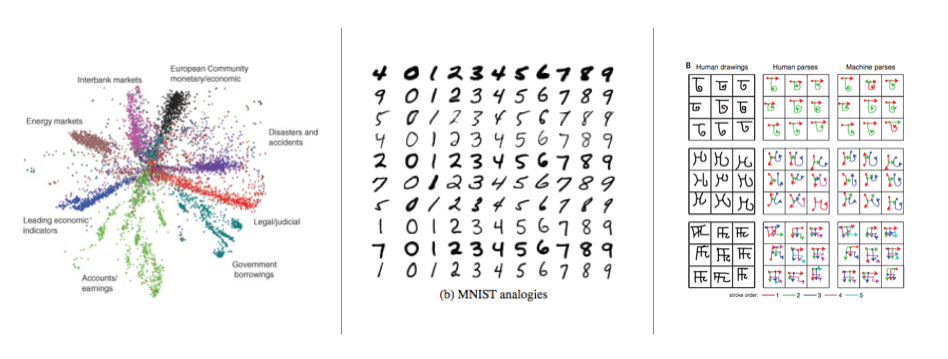

Modern artificial intelligence is data-hungry. To make speech recognition on your Android phone accurate, Google trains a deep neural network on roughly 10,000 hours of annotated speech. In computer vision, ImageNet contains more than 1,034,908 hand-annotated images. These annotations, called labels, are essential to make techniques like deep learning work.

In medicine, each label represents a human life at risk.

For example, in our study with UCSF Cardiology, labeled examples come from people visiting the hospital for a procedure called cardioversion, a 400-joule electric shock to the chest that resets your heart rhythm. It can be a scary experience to go through. Many of these patients are gracious enough to wear a heart rate sensor (e.g., an Apple Watch) during the whole procedure in the hope of making life better for the next generation, but we know we’ll never get one million participants, and it would be unconscionable to ask.

Central Problem #2: Deployment and the Outside-In Principle

Let’s say you’ve built a breakthrough algorithm. What happens next? The experience of MYCIN showed that research results aren’t enough; you need a path to deployment.

Historically, deployment has been difficult in fields like healthcare, education, and government. Electronic Medical Records have no real equivalent to an “App Store” that would let an individual doctor install a new algorithm [8]. EMR software is generally installed on-premises and sold in multi-year cycles to a centralized procurement team within each hospital system, with new features driven by federally mandated checklists. To get a new innovation through a heavyweight procurement process, you need a clear ROI. Unfortunately, hospitals tend to prioritize what they can bill for, which brings us to our ossified and dirigiste payment system, fee-for-service. Under fee-for-service, the hospital bills for each individual activity; in the case of a misdiagnosis, for example, it may perform and bill for follow-up tests. Perversely, that means a better algorithm may actually reduce the revenue of the hospitals expected to adopt it. How on earth would that ever fly?

Even worse, since a fee is specified for each individual service, innovations are non-billable by default. While in the abstract, a life-saving algorithm such as MYCIN should be adopted broadly, when you map out the concrete financial incentives, there’s no realistic path to deployment.

Fortunately, there is a change we can believe in. First, we don’t “ship” software anymore, we deploy it instantly. Second, the Affordable Care Act creates the ability for startups to own risk end-to-end: full-stack startups for healthcare.

First, shipping. Imagine yourself as an AI researcher building Microsoft Word’s spell checker in the 90s. Your algorithm would be limited to whatever data you could collect in the lab; if you discovered a breakthrough, it would take years to ship. Now imagine yourself ten years later, working on spelling correction at Google. You can build your algorithm based on billions of search queries, clicks, page views, web pages, and links. Once it’s ready, you can deploy it instantly. That leads to a 100x faster feedback loop. More than any one algorithmic advance, systems like Google search perform so well because of this fast feedback loop.

The same thing is quietly becoming possible in medicine. We all have a supercomputer in our pocket. If you can find a way to package up artificial intelligence within an iOS or Android app, the deployment process shifts from an enterprise sales cycle to an app store update.

Second, the unbundling of risk. The Affordable Care Act has created alternatives to fee-for-service. For example, in bundled payments, Medicare pays a fixed fee for a surgery, and if the patient needs to be re-hospitalized within 90 days, the original provider is on the hook financially for the cost. That flips the incentives: if you invent a new AI algorithm 10% better at predicting risk (or better, preventing it), that now drives the bottom line for a hospital. There are many variants of fee-for-value being tested now: Accountable Care Organizations, risk-based contracting, full capitation, MACRA and MIPS, and more.

These two things enable outside-in approaches to healthcare: build up a user base outside the core of the healthcare system (e.g., outside the EMR), but take on risk for core problems within the healthcare system, such as re-hospitalizations. Together, these two factors let startups solve problems end-to-end, much the same way Uber solved transportation end-to-end rather than trying to sell software to taxi companies.

Only Partially a Problem: Regulation and Fear

Many entrepreneurs and researchers fear healthcare because it’s highly regulated. The perception is that many regulatory regimes are just an expensive way to say “no” to new ideas.

And that perception is sometimes true. Certificates of Need, risk-based capital requirements, overly burdensome reporting, and fee-for-service sometimes create major barriers to new entrants and innovations, largely to our collective detriment.

But regulations can also be your ally. Take HIPAA. If the authors of MYCIN wanted to make it possible to run their algorithm on your medical record in 1978, there was really no way to do that. The medical record was owned by the hospital, not the patient. HIPAA, passed in 1996, flipped the ownership model: if the patient gives consent, the hospital is required to send the record to the patient or a designee. Today those records are sometimes faxes of paper copies, but efforts like Blue Button, FHIR, and meaningful use are moving them toward machine-readable formats. As my friend Ryan Panchadsaram says, HIPAA often says you can.

Closing Thoughts

If you’re a skilled AI practitioner currently sitting on the sidelines, now is your time to act. The problems that have kept AI out of healthcare for the last 40 years are now solvable. And your impact is large.

Modern research has become so specialized that our notion of impact is sometimes siloed. A world-class clinician may be rewarded for inventing a new surgery; an AI researcher may get credit for beating the world record on MNIST. When two fields cross, there can sometimes be fear, misunderstanding, or culture clashes.

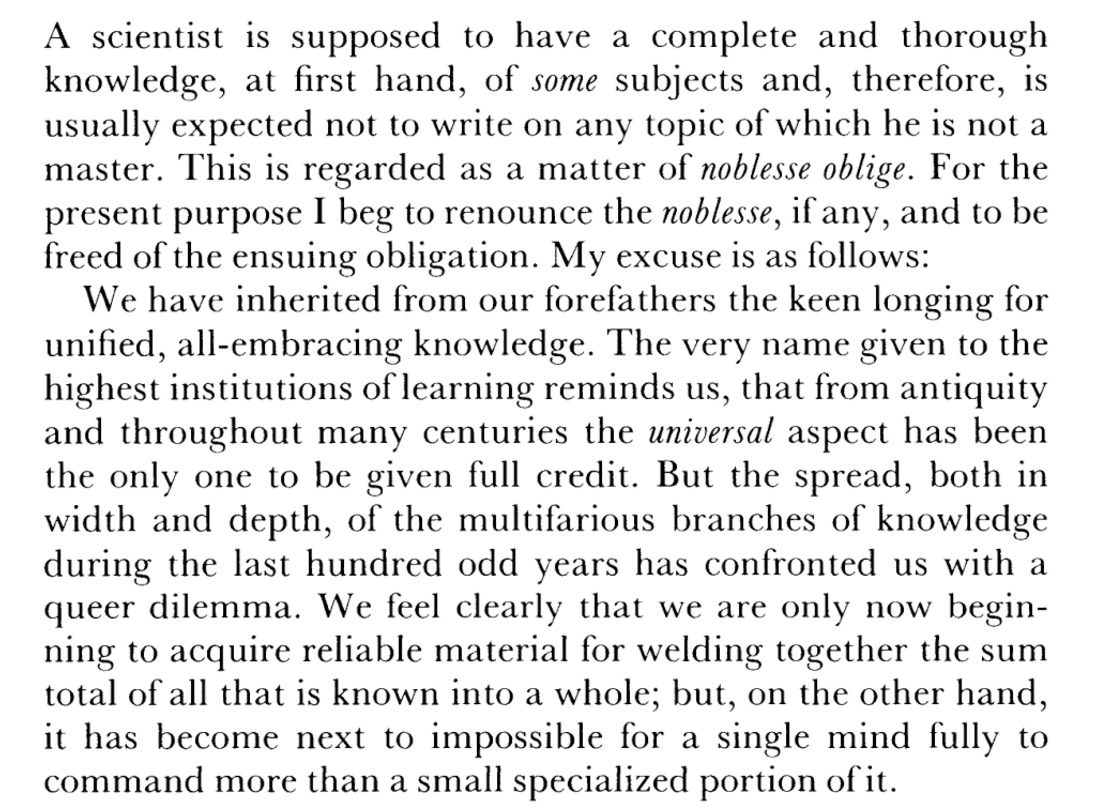

We’re not unique in history. By 1944, the foundations of quantum physics had been laid, and the following year they were demonstrated dramatically by the detonation of the first atomic bomb. After the war, a generation of physicists turned their attention to biology. In the 1944 book What is Life?, Erwin Schrödinger referred to a sense of noblesse oblige that prevented researchers in disparate fields from collaborating deeply, and “beg[ged] to renounce the noblesse.”

Over the next 20 years, the field of molecular biology unfolded. Schrödinger himself used quantum mechanics to predict that our genetic material had the structure of an “aperiodic crystal.” Meanwhile, Luria and Delbrück (an M.D. and a physics PhD, respectively) discovered the genetic mechanism by which viruses replicate. The next decade, Watson (a biologist) and Crick (a physicist) applied x-rays from Rosalind Franklin (a chemist) to discover the double-helix structure of DNA. Both Luria & Delbrück and Watson & Crick would go on to win Nobel Prizes for those interdisciplinary collaborations. (Franklin herself had passed away by the time the latter prize was awarded.)

If AI in medicine were a hundredth as successful as physics was in biology, the impact would be astronomical. To return to the example of CHA2DS2-VASc, there are about 21 million people on blood thinners worldwide; if a third of those don’t need them, then we’re causing 266,000 extra brain hemorrhages [7]. And that’s just one score for one disease. But we can only solve these problems if we beg to renounce the noblesse, as generations of scientists did before us. Lives are at stake.

Notes

[1] An interesting snapshot of history can be found in the 1982 book Artificial Intelligence in Medicine, long out of print. The author’s own exasperated reflection is that many of the ideas from the 80’s are still vibrant, but “medical record systems have moved toward routine adoption so slowly that the authors would have been shocked in 1982 to discover that many of the ideas we described are still immensely difficult to apply in practice because the data they rely on are not normally available in machine-readable form.” A more recent survey is Thirty years of artificial intelligence in medicine (AIME) conferences: A review of research themes (2015).

[2] Machine Learning in Medicine (Circulation, 2015) is a good summary, particularly the last section on the relationship between precision medicine and layers of representation in deep learning.

[3] The c-statistic is also equivalent to the area under the ROC curve. Original source for CHA2DS2-VASc: Lip 2010.
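To make the c-statistic in note [3] concrete, here is a minimal Python sketch. It computes the statistic as the fraction of (event, non-event) patient pairs in which the patient who had the event received the higher risk score, which is the same quantity as the area under the ROC curve. The function name `c_statistic` and the toy scores and outcomes are made up for illustration; they are not taken from Lip 2010 or any real cohort.

```python
from itertools import product


def c_statistic(scores, outcomes):
    """Concordance (c-statistic) of risk scores against binary outcomes.

    Counts the share of (event, non-event) pairs where the event case
    has the higher score; tied scores count as half a concordant pair.
    """
    events = [s for s, y in zip(scores, outcomes) if y == 1]
    non_events = [s for s, y in zip(scores, outcomes) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")

    concordant = 0.0
    for e, n in product(events, non_events):
        if e > n:
            concordant += 1.0
        elif e == n:
            concordant += 0.5
    return concordant / (len(events) * len(non_events))


# Hypothetical toy data: integer risk scores and whether an event occurred.
toy_scores = [1, 2, 2, 3, 4, 5]
toy_outcomes = [0, 0, 1, 0, 1, 1]

print(c_statistic(toy_scores, toy_outcomes))
```

A score that ranks every event above every non-event gets 1.0, and a score that carries no information gets 0.5, so values in the 0.6 to 0.7 range sit much closer to a coin flip than to a perfect ranking.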

[4] From Risk stratification for sudden cardiac death: current status and challenges for the future and Risk Stratification for Sudden Cardiac Death: A Plan for the Future.

[5] That’s not to imply the app is just a front for research: we care deeply that Cardiogram stands alone as an engaging, well-designed, and useful app. But deciding to build an app in the first place was driven by a broader purpose. As one doctor put it, “If you want to do world-class sociology research, build Facebook.”

[6] http://venturebeat.com/2015/12/19/5-must-dos-for-succeeding-in-health-tech/

[7] Warfarin causes brain hemorrhage in 38 out of every 1000 patients.
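The 266,000 figure in the closing section follows from this rate and the two numbers quoted there (about 21 million people on blood thinners, a third of whom are assumed not to need them). A short Python sketch of the arithmetic, using exact fractions to avoid rounding noise; the variable and function names are only illustrative:

```python
from fractions import Fraction

# Inputs quoted in the text and in note [7].
people_on_blood_thinners = 21_000_000
fraction_not_needing_them = Fraction(1, 3)   # the "if a third" assumption
hemorrhage_rate = Fraction(38, 1000)         # 38 per 1000 patients


def extra_hemorrhages(population, unneeded_fraction, rate):
    """Expected hemorrhages among patients treated unnecessarily."""
    return population * unneeded_fraction * rate


estimate = extra_hemorrhages(
    people_on_blood_thinners, fraction_not_needing_them, hemorrhage_rate
)
print(int(estimate))  # 266000
```

The estimate scales linearly with each input, so halving the assumed fraction of unnecessary prescriptions halves the number of avoidable hemorrhages.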

[8] A potential future exception: Illumina’s Helix. See Chrissy Farr’s piece in Fast Company on Illumina’s ambition to create an app store for your genome.